Eye Disease Detection in Fundus Images Using a Convolutional Neural Network (CNN)

DOI: https://doi.org/10.33633/tc.v21i2.6162

Keywords: ocular disease, convolutional neural network (CNN), MobileNetV2, fundus image, image classification

Abstract

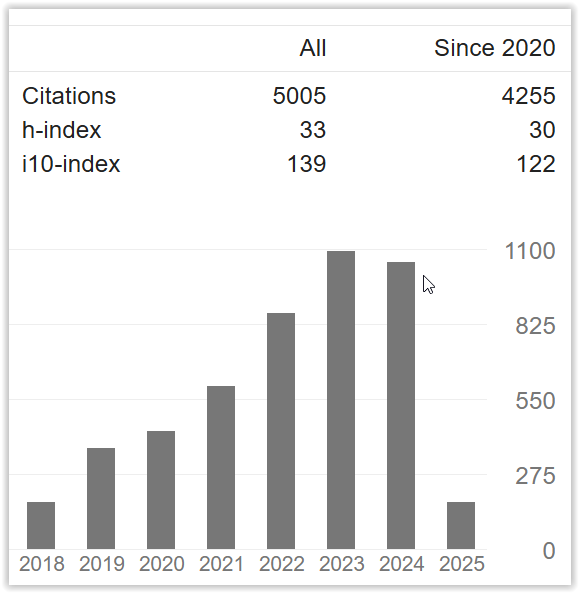

In 2020, 1.1 billion people worldwide were living with vision loss, and this number is projected to reach 1.76 billion by 2050. Eye disease is the leading cause of blindness among children and adolescents, and it can be prevented through early detection and treatment. This study therefore proposes a Convolutional Neural Network (CNN)-based method for detecting eye diseases in fundus images. The method uses transfer learning, with the MobileNetV2 architecture as the base model. The proposed head model, consisting of a global average pooling layer followed by two fully-connected layers, gave the highest accuracy and the best efficiency among the head model architectures compared. Experiments on a dataset of 601 fundus images covering a variety of eye diseases show that the proposed method performs well, with an accuracy of 72%, a precision of 72%, a recall of 72%, and an F1-score of 72%. These results indicate that the proposed method is both more accurate and more efficient than other CNN architectures such as ResNet50V2, InceptionV3, InceptionResNetV2, VGG16, and VGG19.
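The head model described above can be illustrated with a small NumPy forward pass. This is a minimal sketch under stated assumptions, not the authors' implementation: the base model's output is assumed to be a 7×7×1280 feature map (what MobileNetV2 with width multiplier 1.0 produces for a 224×224 RGB input), and the hidden width (128) and number of classes (4) are hypothetical, since the abstract gives neither. In actual transfer learning the head weights would be learned on top of the pretrained MobileNetV2 features rather than drawn at random as they are here.

```python
import numpy as np

# Assumed (hypothetical) sizes: the abstract does not state them.
FEATURE_SHAPE = (7, 7, 1280)  # MobileNetV2 (alpha=1.0) output for a 224x224 RGB input
HIDDEN_UNITS = 128            # hypothetical width of the first fully-connected layer
NUM_CLASSES = 4               # hypothetical number of eye-disease classes


def global_average_pooling(feature_maps: np.ndarray) -> np.ndarray:
    """Average each channel over its spatial grid: (N, H, W, C) -> (N, C)."""
    return feature_maps.mean(axis=(1, 2))


def relu(x: np.ndarray) -> np.ndarray:
    return np.maximum(x, 0.0)


def softmax(logits: np.ndarray) -> np.ndarray:
    # Subtract the row maximum for numerical stability before exponentiating.
    shifted = logits - logits.max(axis=1, keepdims=True)
    exp = np.exp(shifted)
    return exp / exp.sum(axis=1, keepdims=True)


def init_head(rng, channels=FEATURE_SHAPE[-1], hidden=HIDDEN_UNITS, classes=NUM_CLASSES):
    """Random weights for GAP -> Dense(ReLU) -> Dense(softmax)."""
    return {
        # He-normal-style scaling for the ReLU layer.
        "w1": rng.normal(0.0, np.sqrt(2.0 / channels), size=(channels, hidden)),
        "b1": np.zeros(hidden),
        # Scaled normal initialisation for the output layer.
        "w2": rng.normal(0.0, np.sqrt(1.0 / hidden), size=(hidden, classes)),
        "b2": np.zeros(classes),
    }


def head_forward(params, feature_maps):
    """Map base-model feature maps to per-class probabilities."""
    pooled = global_average_pooling(feature_maps)         # (N, C)
    hidden = relu(pooled @ params["w1"] + params["b1"])   # first fully-connected layer
    return softmax(hidden @ params["w2"] + params["b2"])  # second fully-connected layer


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    params = init_head(rng)
    # Random stand-in for MobileNetV2 feature maps of a batch of 2 fundus images.
    features = rng.random((2, *FEATURE_SHAPE))
    probs = head_forward(params, features)
    print(probs.shape)  # (2, 4)
    print(probs.argmax(axis=1))  # predicted class index per image
```

Because global average pooling collapses each 7×7 channel to a single value, the head's size depends only on the channel count (1280) and not on the spatial resolution. Under the assumed sizes it has 1280 × 128 + 128 + 128 × 4 + 4 = 164,484 trainable parameters.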

License

Copyright (c) 2022 Rarasmaya Indraswari, Wiwiet Herulambang, Rika Rokhana

This work is licensed under a Creative Commons Attribution-NonCommercial 4.0 International License.

License Terms

All articles published in Techno.COM Journal are licensed under the Creative Commons Attribution-NonCommercial 4.0 International (CC BY-NC 4.0). This means:

1. Attribution

Readers and users are free to:

- Share – Copy and redistribute the material in any medium or format.
- Adapt – Remix, transform, and build upon the material.

These freedoms apply as long as proper credit is given to the original work by citing the author(s) and the journal.

2. Non-Commercial Use

- The material cannot be used for commercial purposes.
- Commercial use includes selling the content, using it in commercial advertising, or integrating it into products/services for profit.

3. Rights of Authors

- Authors retain copyright and grant Techno.COM Journal the right to publish the article.
- Authors can distribute their work (e.g., in institutional repositories or personal websites) with proper acknowledgment of the journal.

4. No Additional Restrictions

- The journal cannot apply legal terms or technological measures that restrict others from using the material in ways allowed by the license.

5. Disclaimer

- The journal is not responsible for how the published content is used by third parties.
- The opinions expressed in the articles are solely those of the authors.

For more details, visit the Creative Commons License Page:

https://creativecommons.org/licenses/by-nc/4.0/